PyTorch-Direct: Introducing Deep Learning Framework with GPU-Centric Data Access for Faster Large GNN Training | NVIDIA On-Demand

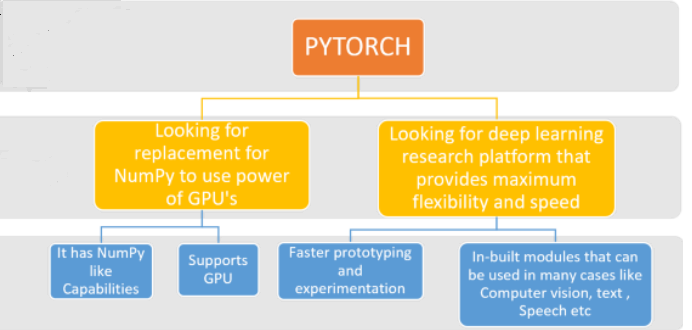

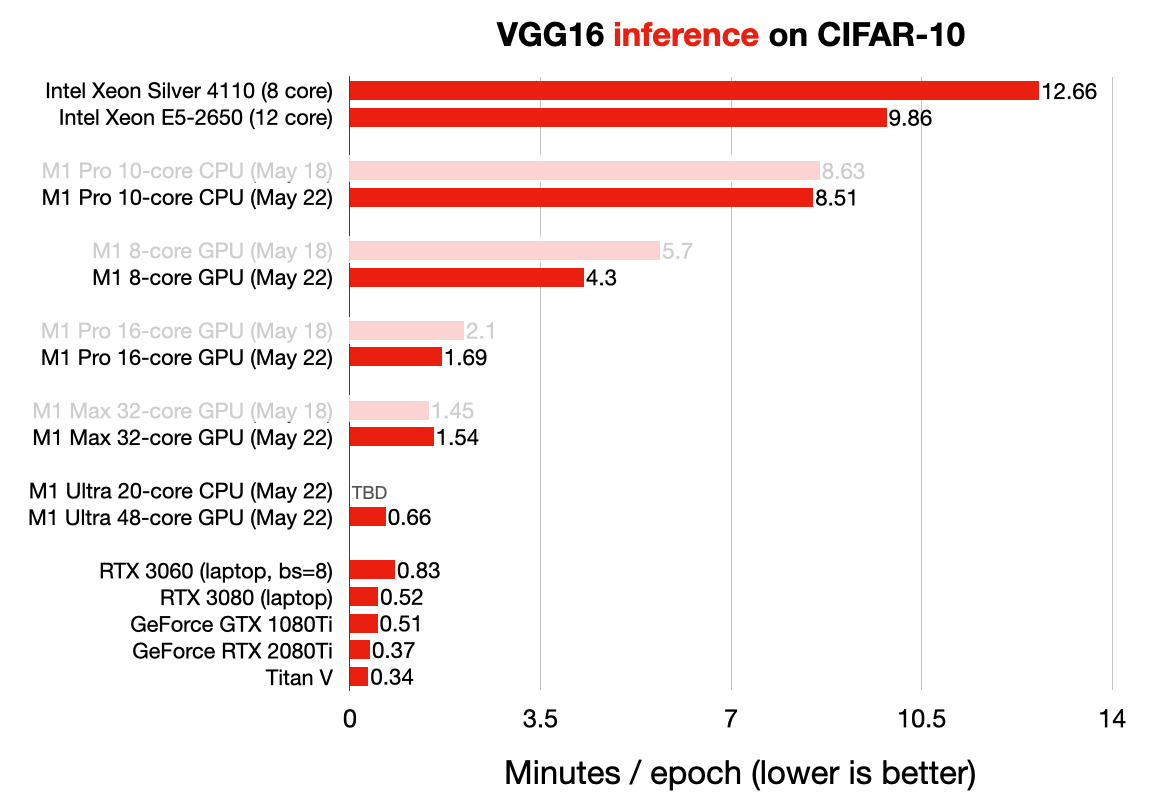

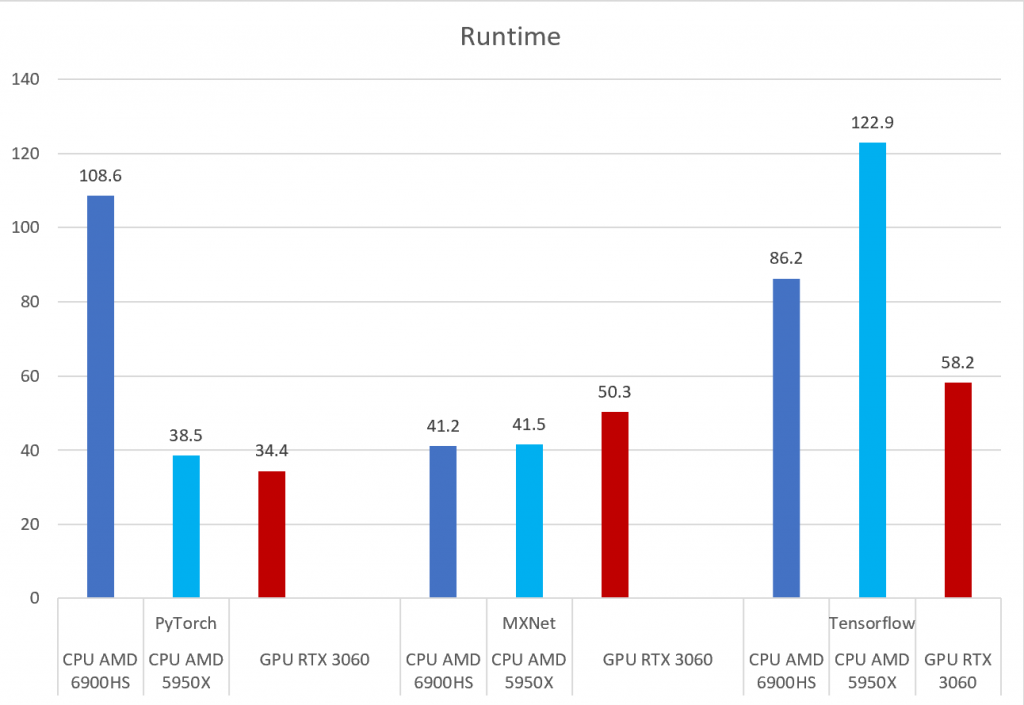

PyTorch, TensorFlow, and MXNet on GPU in the same environment and GPU vs CPU performance – Syllepsis

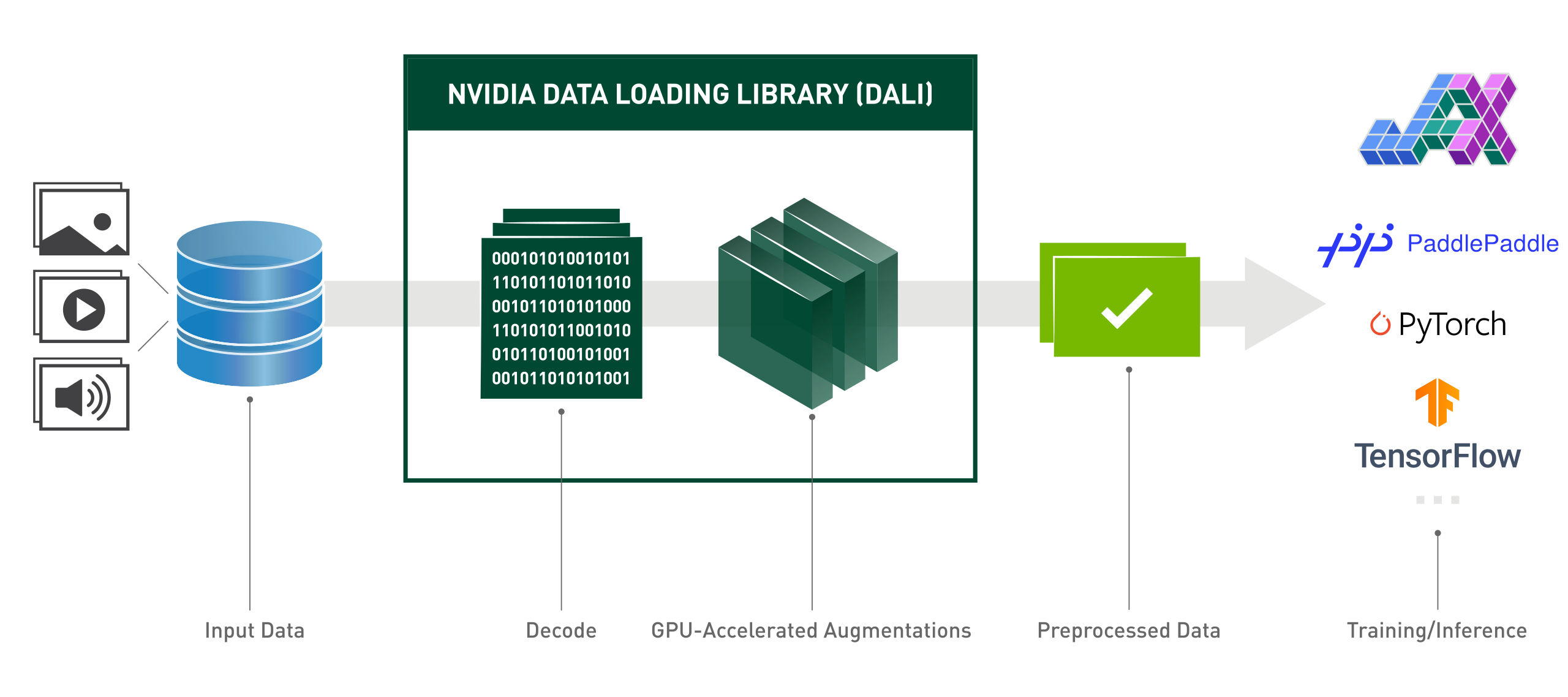

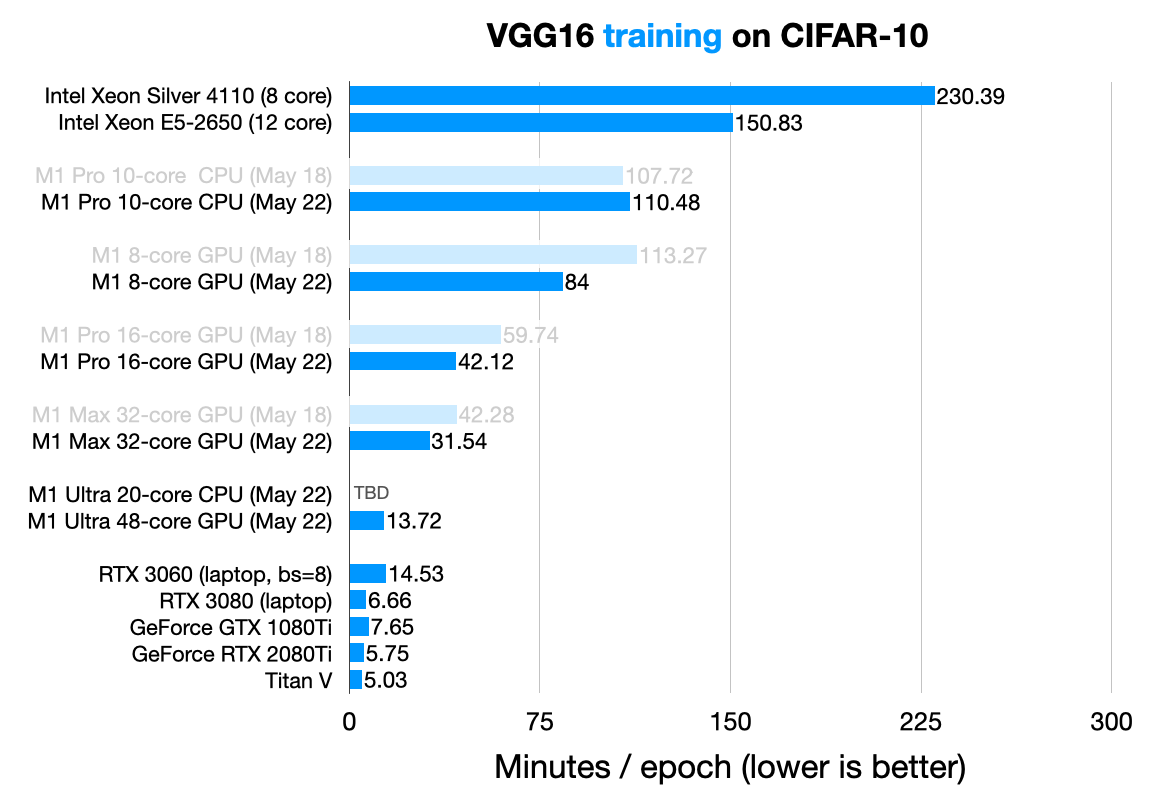

Accelerate computer vision training using GPU preprocessing with NVIDIA DALI on Amazon SageMaker | AWS Machine Learning Blog

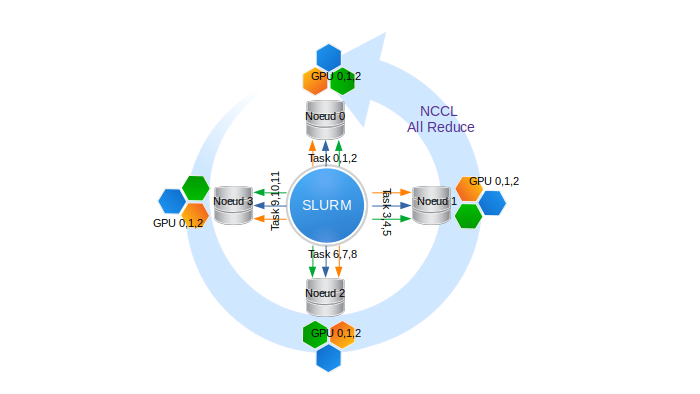

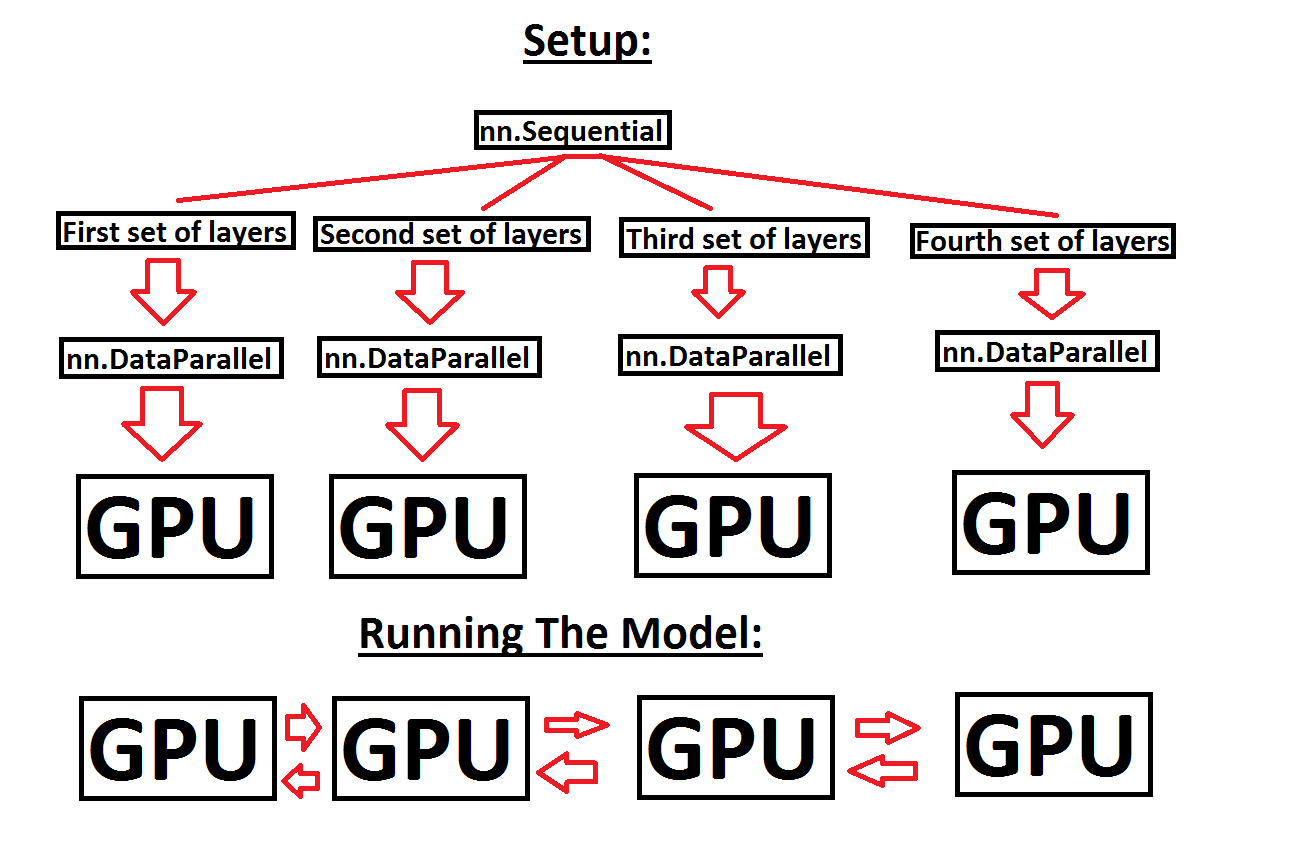

Help with running a sequential model across multiple GPUs, in order to make use of more GPU memory - PyTorch Forums

PyTorch MNIST example spawns multiple processes in the same GPU · Issue #287 · horovod/horovod · GitHub

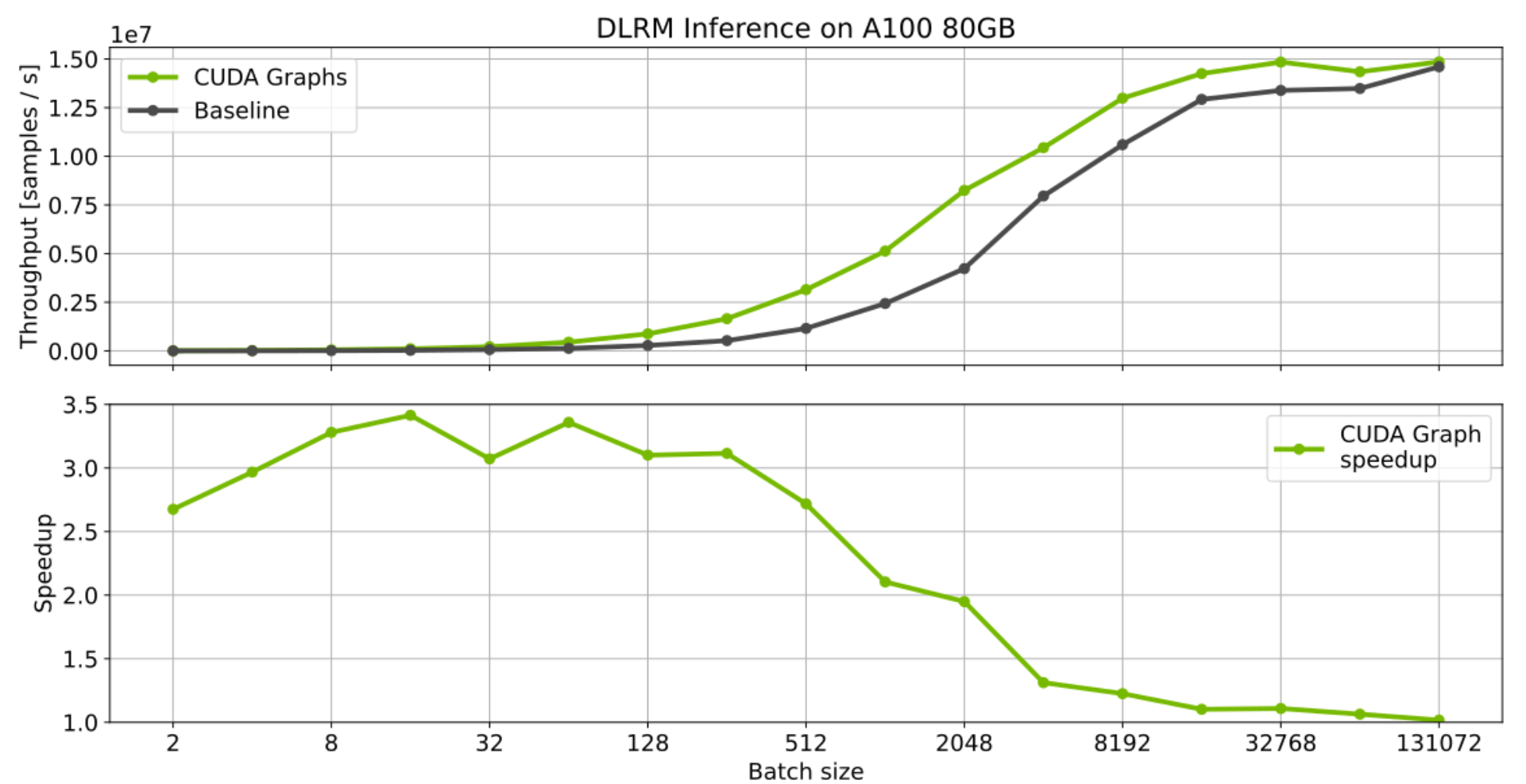

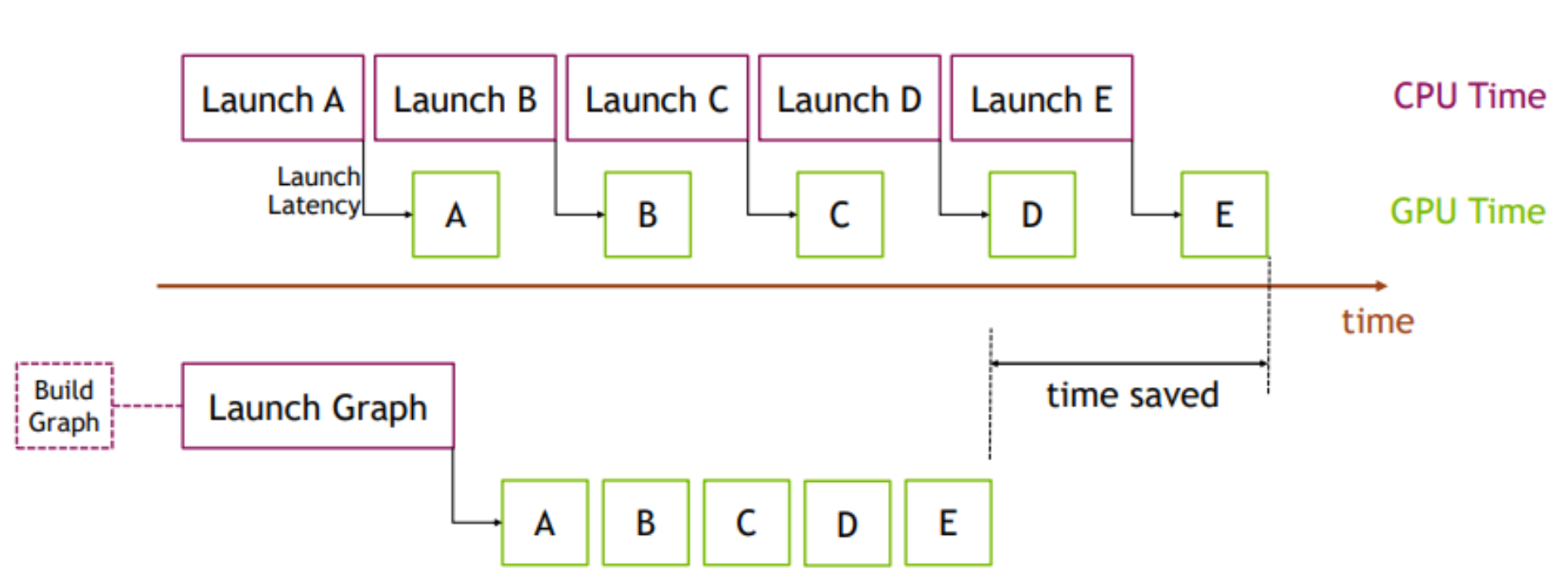

CPU execution/dispatch time dominates and slows down small TorchScript GPU models · Issue #72746 · pytorch/pytorch · GitHub